Navigating the AI Age: What the EU AI Act and Global Regulations Mean for Your Business

The digital landscape is electrifying, innovation is exploding, and AI is at the heart of it all. But with unprecedented power comes unprecedented responsibility. Across the globe, a new era of AI governance is dawning, fundamentally reshaping how developers build and businesses deploy this transformative technology.

For years, the development of Artificial Intelligence felt like the Wild West: a frontier of boundless possibilities with few rules. Now, the sheriffs are in town. The EU AI Act, the world’s first comprehensive AI legislation, is setting a precedent that ripples far beyond Europe’s borders. With frameworks also emerging in the US, UK, and Asia, developers and businesses are entering a new phase where ethical considerations and compliance are not just buzzwords but cornerstones of success.

The EU AI Act: Your New AI Compass

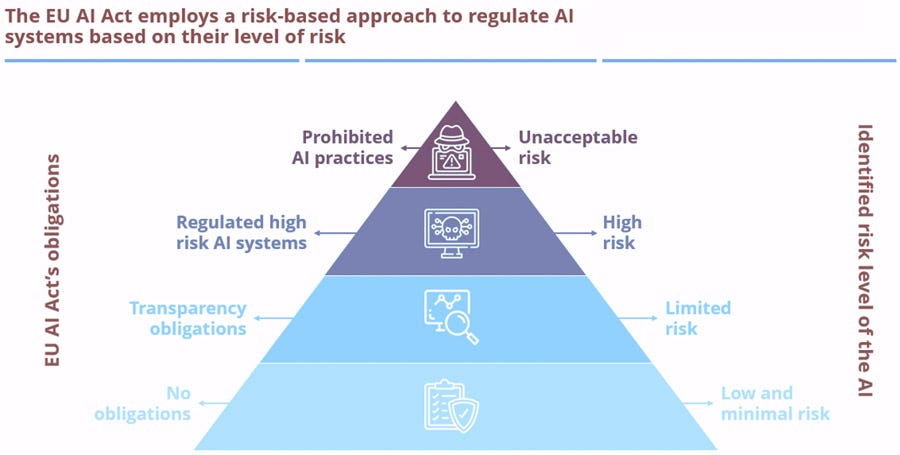

The EU AI Act isn’t a blanket ban; it’s a meticulously crafted, risk-based framework designed to foster responsible innovation. It categorizes AI systems into four distinct risk levels, each with varying degrees of scrutiny:

- Unacceptable Risk (Prohibited): Think dystopian scenarios like social scoring, manipulative AI, or real-time remote biometric identification in publicly accessible spaces by law enforcement (with very narrow exceptions). These are out, full stop.

- High Risk: This is where the rubber meets the road for many businesses. AI in critical sectors like healthcare, law enforcement, employment, education, and essential infrastructure falls here. If your AI system could significantly impact fundamental rights or safety, prepare for rigorous obligations. These include:

    - Robust Risk Management: Continuous identification and mitigation of risks throughout the AI’s lifecycle.

    - High-Quality Data: Ensuring your training data is clean, unbiased, and representative, a critical step in preventing algorithmic discrimination.

    - Transparency & Human Oversight: Designing systems that can be explained and understood, and in which humans can intervene effectively.

    - Technical Documentation & Registration: Comprehensive records of your AI model and its performance, and registration in a public EU database.

- Limited Risk: Chatbots and deepfakes fall here. The primary obligation? Transparency. Users need to know they’re interacting with an AI or that content is AI-generated.

- Minimal or No Risk: The vast majority of AI, like spam filters or video game AI, will face minimal regulatory hurdles.
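To make the four tiers concrete, here is a minimal Python sketch of a first-pass triage over an inventory of AI systems. The names `RiskTier`, `AISystem`, `classify`, and the keyword sets are hypothetical and built only from the examples in this article; they are not the Act’s legal definitions, and a real classification needs legal review.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Illustrative labels taken from the examples in this article only.
# Assumption: these are NOT the Act's legal definitions.
PROHIBITED_USES = {"social_scoring", "manipulative_ai", "realtime_public_biometric_id"}
HIGH_RISK_SECTORS = {
    "healthcare", "law_enforcement", "employment",
    "education", "essential_infrastructure",
}
LIMITED_RISK_USES = {"chatbot", "deepfake"}

# Headline obligations per tier, as summarized in the list above.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["do not deploy"],
    RiskTier.HIGH: [
        "risk management",
        "high-quality data",
        "transparency and human oversight",
        "technical documentation and registration",
    ],
    RiskTier.LIMITED: ["disclose AI interaction or AI-generated content"],
    RiskTier.MINIMAL: [],
}


@dataclass
class AISystem:
    name: str
    use_case: str
    sector: str = "general"


def classify(system: AISystem) -> RiskTier:
    """Return the first matching tier, checking the strictest tier first."""
    if system.use_case in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if system.sector in HIGH_RISK_SECTORS:
        return RiskTier.HIGH
    if system.use_case in LIMITED_RISK_USES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


# Hypothetical inventory used only to exercise the function.
inventory = [
    AISystem("cv-screener", "candidate_ranking", sector="employment"),
    AISystem("support-bot", "chatbot"),
    AISystem("mail-filter", "spam_filter"),
]
for system in inventory:
    tier = classify(system)
    print(f"{system.name}: {tier.value} -> {OBLIGATIONS[tier]}")
```

Checking the strictest tier first means a system that matches several categories is always assigned the most demanding one, which is the safer default for a first-pass triage.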

The catch? Its reach is global. If you place AI systems on the EU market, or if the output of your AI system is used in the EU, this Act applies to you, regardless of where your company or servers are located. Non-compliance isn’t just a slap on the wrist; for the most serious violations, we’re talking about fines of up to €35 million or 7% of global annual turnover, whichever is higher.
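As a rough illustration of the scale involved, the sketch below computes the upper bound of a fine for the most serious violations, assuming the ceiling is the higher of the fixed amount and the turnover share. The function name `max_fine_eur` is hypothetical, and actual penalties depend on the type of infringement and are set case by case.

```python
FIXED_CAP_EUR = 35_000_000      # fixed ceiling cited above
TURNOVER_SHARE_PERCENT = 7      # share of global annual turnover cited above


def max_fine_eur(global_annual_turnover_eur: int) -> int:
    """Upper bound of the fine, assuming the higher of the two ceilings applies."""
    turnover_based = global_annual_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


# A company with EUR 1 billion in turnover: the 7% ceiling dominates.
print(max_fine_eur(1_000_000_000))  # 70000000
# A company with EUR 100 million in turnover: the fixed ceiling dominates.
print(max_fine_eur(100_000_000))    # 35000000
```

Under this assumption the two ceilings meet at €500 million in turnover; above that, exposure scales with the size of the business.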

Beyond Europe: A Patchwork of Global Approaches

While the EU leads, other nations are charting their own courses:

- United States: A more fragmented landscape with executive orders, potential federal laws, and state-specific regulations. The emphasis is often on data privacy, accountability, and the NIST AI Risk Management Framework (AI RMF), a voluntary but influential guide.

- United Kingdom: A sector-specific, pro-innovation approach that relies on existing regulators rather than a single AI law. A dedicated AI Authority has been proposed in Parliament but not established.

- Asia: Countries like India and Singapore are actively developing their own principles and frameworks for responsible AI, often aligning with global ethics while focusing on local nuances.

This diverse regulatory environment means businesses operating internationally will need a sophisticated understanding of compliance to navigate this complex web.

The Win-Win: Responsible AI as a Strategic Advantage

Some might fear that regulation stifles innovation, but the truth is often the opposite. By embedding responsibility into your AI strategy, you don’t just avoid hefty fines; you build a competitive edge:

- Enhanced Trust: Demonstrating compliance fosters confidence among customers, partners, and investors. In an age where data privacy and ethical AI are paramount, trust can translate into market share.

- Reduced Risk: Proactive compliance minimizes legal, reputational, and operational risks, ensuring your AI systems are robust, fair, and secure.

- Market Access: Adhering to the EU AI Act opens doors to one of the world’s largest and most discerning digital markets.

- Sustainable Innovation: Building responsible AI from the ground up ensures long-term viability and aligns with societal values, attracting top talent and fostering a positive brand image.

Your Action Plan: Don’t Get Left Behind

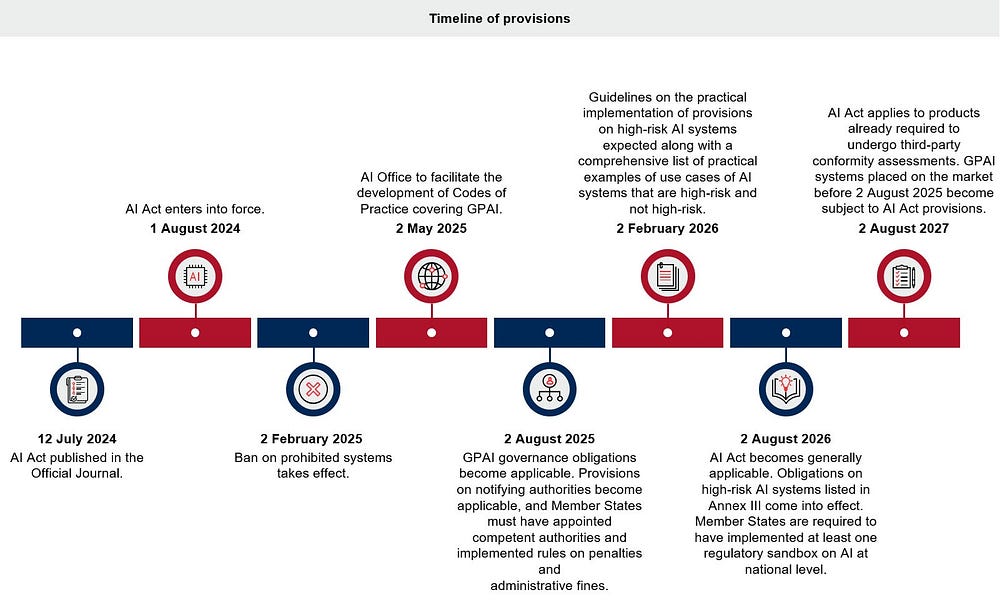

The clock is ticking: the Act entered into force in August 2024, its prohibitions have applied since February 2025, and most high-risk obligations are scheduled to follow from August 2026. Here’s what developers and businesses need to be doing now:

- Inventory & Classify: Understand every AI system you use or develop and categorize its risk level under relevant regulations.

- Audit Your Data: Scrutinize your training data for biases, ensure its quality, and verify ethical sourcing and consent.

- Document Everything: Create comprehensive technical documentation for all your AI models, from development to deployment.

- Embrace Transparency & Explainability: Design your AI with clear human oversight mechanisms and ensure its decisions can be understood and explained.

- Build a Culture of Responsibility: Foster ethical AI practices across your organization, from engineers to leadership.

- Seek Expertise: Engage legal and compliance professionals to navigate the nuances of global AI regulations.
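The data-audit step in the list above can start very simply. The following sketch reports, for each group in a labeled dataset, its share of the records and its rate of positive labels, and flags groups below a chosen share. The function name `audit_groups`, the field names, and the `min_share` threshold are hypothetical choices for illustration; a real audit would use fairness metrics appropriate to the use case.

```python
from collections import Counter, defaultdict


def audit_groups(records, group_key, label_key, min_share=0.10):
    """Report each group's share of the data and its positive-label rate.

    `min_share` is an arbitrary illustrative threshold, not a legal one.
    """
    total = len(records)
    counts = Counter(r[group_key] for r in records)
    positives = defaultdict(int)
    for r in records:
        positives[r[group_key]] += int(bool(r[label_key]))

    report = {}
    for group, n in counts.items():
        share = n / total
        report[group] = {
            "share": share,
            "positive_rate": positives[group] / n,
            "under_represented": share < min_share,
        }
    return report


# Hypothetical toy dataset: 8 records for group "a", 2 for group "b".
records = (
    [{"group": "a", "hired": 1}] * 4
    + [{"group": "a", "hired": 0}] * 4
    + [{"group": "b", "hired": 0}] * 2
)
for group, stats in audit_groups(records, "group", "hired", min_share=0.25).items():
    print(group, stats)
```

A large gap in positive rates between groups, or a flagged under-represented group, does not prove discrimination on its own, but it marks where to look more closely and what to record in your technical documentation.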

The AI revolution isn’t just about technological prowess anymore; it’s about building a future where AI is powerful, beneficial, and, above all, responsible. By proactively engaging with these new regulations, developers and businesses aren’t just adapting; they’re shaping the ethical backbone of the next generation of AI, securing a brighter, more trustworthy digital future for everyone.

Sources: digital-strategy.ec.europa.eu, consultancy.eu, wikipedia.com, insightplus.bakermckenzie.com, datamatics.com

Authored By: Shorya Bisht
